How AI is transforming TV and video

Eden Zoller, Chief Analyst

Artificial Intelligence (AI) describes advanced technologies and algorithmic modelling that can enable a growing range of high value applications and use cases. Most AI systems are based on machine learning (ML) and deep learning (DL) models, with the latter being a more sophisticated approach based on neural networks. ML and DL models “learn” from the data they process, in a supervised or unsupervised manner, and become more efficient with greater exposure to data. ML and DL apply these learnings to make predictions, classify data, recognize objects or images, and comprehend speech or text. AI encompasses a range of technologies with important areas including visual AI (e.g. object, image and facial recognition) and voice AI (e.g. voice recognition, natural language processing and understanding). But those just scratch the surface of the utility that intelligent, learning systems can deliver back to media and advertising businesses.

“AI [is enhancing] how consumers engage with TV and video by making recommendations more contextually relevant . . .”

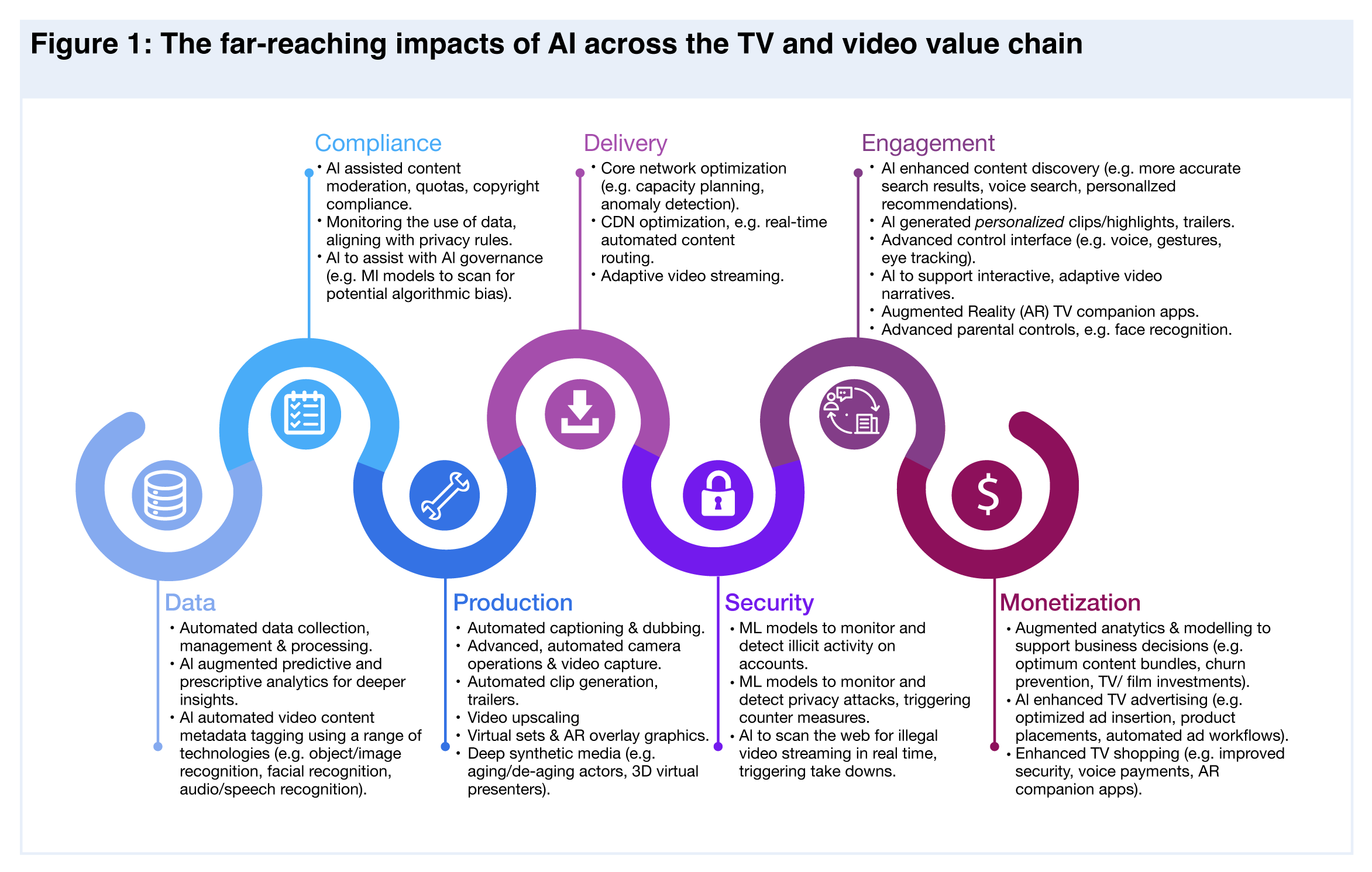

AI is having a profound impact on TV and video, touching every domain (as shown in Figure 1) and bringing multiple benefits. For example, in the data domain, AI is changing the stakes in analytics with ML models that can interrogate data in real time to reveal more granular, actionable insights. AI is enabling the automation of media assets and production workflows, while having a transformative effect on video content metadata tagging that enables multiple use cases. AI is supporting content compliance, for example with ML algorithms that can be trained to identify and tag content that is subject to restrictions. At the same time, AI is helping to improve TV and video production, for example through advanced dubbing techniques and automated clip generation. When it comes to delivery, AI-powered network resource planning, orchestration and fault detection can improve network efficiency and optimize performance. AI is also enhancing how consumers engage with TV and video: by making recommendations more contextually relevant, by providing new types of user interfaces (e.g. voice and gesture control), and with Augmented Reality (AR) applications that can make content more interactive and immersive. Alongside all this, AI is helping to improve monetization opportunities, for example by enabling more relevant, contextual ad placements and by driving advances in shoppable TV.

AI-powered data transformation

The use of analytics in TV and video is of course not new, but what is changing the stakes is data management and analytics solutions augmented by AI, as outlined below.

Taking the pain out of data management

TV and video data is high-volume, fragmented, and of variable quality, all of which make data processing difficult and time consuming. AI automation tools are highly efficient at managing large data sets at scale, at speed, and in real time – critical in the case of live broadcast.

Improved data quality control

ML algorithms can be trained to maintain the quality and integrity of both incoming and outgoing data sets, for example by detecting anomalies and noise in data sets and by identifying and repairing missing data.
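As a rough illustration of these two tasks, the Python sketch below flags outliers with a median-based score and fills gaps by linear interpolation. It is a simple statistical stand-in, not a trained ML model, and the function names, the 3.5 threshold and the sample audience figures are assumptions made for illustration only.

```python
from statistics import median

def flag_anomalies(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold."""
    present = [v for v in values if v is not None]
    med = median(present)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median(abs(v - med) for v in present)
    if mad == 0:
        return []
    return [i for i, v in enumerate(values)
            if v is not None and 0.6745 * abs(v - med) / mad > threshold]

def fill_missing(values):
    """Fill None gaps by linear interpolation between the nearest known values."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        return filled
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None or right is None:
            # At either edge of the series, copy the nearest known value.
            filled[i] = values[right if left is None else left]
        else:
            step = (values[right] - values[left]) / (right - left)
            filled[i] = values[left] + step * (i - left)
    return filled

# Hypothetical per-minute audience counts with one gap and one spike.
viewers = [100, 102, None, 98, 101, 950, 99]
print(flag_anomalies(viewers))  # index of the suspicious spike
print(fill_missing(viewers))    # gap replaced by an interpolated value
```

A production pipeline would more likely learn what "normal" looks like from historical data, but the shape of the task (score each point, then repair gaps) is the same.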

Augmented analytics works smarter and faster

ML algorithms are dramatically improving what analytics can do, and as a consequence also improving the insights and outcomes that can be achieved – insights which can be used to support multiple use cases and improve business decisions. For example:

- ML models can interrogate data in real time to reveal hitherto unidentified connections and trends that traditional analytics cannot surface.

- AI-augmented analytics is adept at extracting meaning from unstructured data, which accounts for most video data.

- Algorithms can be fine-tuned and trained for very specific, deep data queries, which means more granular, targeted results.

Smart metadata tagging is at the heart of everything

Content metadata tagging involves analyzing video content in order to recognize, classify, and label elements of that content. This process creates metadata that makes content data searchable and actionable. Videos contain massive amounts of content data, as highlighted in Table 1, which is by no means an exhaustive list.

AI-powered content data tagging solutions are transformative

The volume, variety and granularity of video content make manual metadata tagging slow, inaccurate and/or only high level. In contrast, AI automated content tagging solutions can operate at speed and scale, and produce very granular results. ML algorithms can be trained to identify and automatically tag different elements of video content, using a range of AI technology including facial recognition, audio/speech recognition and sentiment/emotion recognition. Video content metadata tagging can enable and enhance multiple use cases, of which key examples are summarized in Table 2.

Powerful services increasing accessibility and monetization

Subtitles and dubbing make content accessible to viewers who might not understand the original language, or who have hearing difficulties (subtitles) or visual challenges (dubbing). It is not surprising then that these services are invaluable to consumers, as shown by Omdia’s Devices, Media and Usage survey results seen in Figure 2. Subtitles and dubbing also have a strong monetization aspect, as they make local content available to international markets.

Subtitles/captioning

In the case of subtitles, AI-automated captioning leverages automatic speech recognition (ASR). ML algorithms are trained on a range of speech and other sound inputs (e.g. laughter, breaking glass, an explosion) to recognize patterns and meaning, and to automatically produce text outputs (transcripts, the captions/subtitles). In the case of TV, film and live broadcast, ASR models must be trained to recognize millions of words in many different languages in multiple contexts.

Language dubbing

Dubbing describes the process where original language dialogue is translated into a new language with synchronized lip/mouth movements. The traditional approach to dubbing (hiring local actors to learn the role and voice the translated script) is an expensive, lengthy process that typically produces imperfect results and, at worst, can spoil the viewing experience. AI solutions are driving significant improvements, with cutting edge dubbing solutions drawing on deep generative modeling techniques. This can involve using an actor’s original voice as an input into a generative model that creates a synthetic voice that can form words in a new language but based on the same authentic pitch and timbre as the original actor’s voice. An alternative approach is based on visual rather than verbal dubbing, where deep generative modelling creates a synthetic version of the actor’s face speaking the target language, with lip and facial movements that correspond with the exact formations/expressions used by a native speaker.

Automated Clips and Trailers

Fine-tuning the process for faster results

Clip highlights are a staple of the viewing experience. AI can be used to automatically generate clips from a wide variety of content sources: sporting events, a rolling news story, reality TV, etc. ML models essentially act like a production editor, and must be trained to recognize, classify and compile clips based on defined criteria and elements in a specific context. The various factors that can potentially comprise a desired clip can be scored and ranked, which means the automated clips should contain optimum elements. Hundreds of clips can be generated in this way and the results presented in a dashboard to a human editor who has the final say.
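The scoring and ranking step can be sketched in a few lines of Python. The feature names, weights and candidate clips below are hypothetical; in practice the per-feature scores would come from trained recognition models, and the shortlist would go to a human editor instead of being published directly.

```python
from dataclasses import dataclass

@dataclass
class Clip:
    start_s: float
    end_s: float
    features: dict  # feature name -> model confidence in [0, 1]

# Assumed editorial weights for a football highlights reel.
WEIGHTS = {"goal_event": 1.0, "crowd_noise": 0.5, "star_player": 0.3}

def score(clip, weights=WEIGHTS):
    """Weighted sum of the features detected in a candidate clip."""
    return sum(w * clip.features.get(name, 0.0) for name, w in weights.items())

def top_clips(clips, k=2):
    """Return the k highest-scoring clips for review by a human editor."""
    return sorted(clips, key=score, reverse=True)[:k]

candidates = [
    Clip(10, 25, {"crowd_noise": 0.2}),
    Clip(300, 320, {"crowd_noise": 0.9, "goal_event": 1.0}),
    Clip(610, 640, {"star_player": 1.0, "crowd_noise": 0.4}),
]

for clip in top_clips(candidates):
    print(clip.start_s, clip.end_s, round(score(clip), 2))
```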

Well-executed trailers are a powerful promotional tool, giving consumers a first taste of what is available and acting as a hook to pull them into the full cut of the content. Creating trailers using traditional production methods involves human editors having to scrutinize hours of film footage and manually selecting potential sequences for a trailer. This is where intelligent, learning workflows can help, via ML models that can be trained to identify the most appealing, winning elements for a trailer. Algorithms are trained on trailers for similar TV programs or films, along with associated data inputs such as user feedback and reviews. Algorithms must learn to identify characters, objects, scenery and music in the film; to recognize when sequences are designed to be exciting, frightening, romantic, funny and so on.

Augmented Reality (AR) is the layering of 2D or 3D graphics onto real world environments and objects, augmenting the latter with contextual information and entertainment. AR is already used in broadcast TV, with AR graphics placed as overlays on real or virtual sets alongside human presenters/actors that interact with the graphics. One example is the graphic overlays used by a news anchor to relay complex data in an easy-to-digest format (e.g. charts/graphs summarizing economic data).

The AR graphics software/engine must be able to recognise and understand the real world so that the virtual content and physical environment can react with each other in exactly the right way in real time (e.g. correct scale, pose, location awareness). This is where computer vision and ML play a central role. AR software draws on ML algorithms that have been trained to recognize objects in the world around the user from the content of the camera feed. The recognized object triggers the AR software to render the relevant graphics overlay, and it must do so with precision and in real time.

Consumers want high quality video services and will switch if they don’t get them. In this context it is not surprising that when it comes to the application of AI in TV and video, consumers place a high priority on AI that can improve viewing quality, such as bandwidth optimization and image upscaling, as shown in Figure 3.

- Resource planning and orchestration. ML algorithms can interrogate network data and learn to predict when and where network capacity will be under pressure, for example during a major sporting event or the launch of a much-anticipated “binge watchable” TV series. AI can predict the increased strain and automatically direct and scale up resources as required.

- Fault detection. ML models can be trained to quickly identify in real time unusual patterns and anomalies that are indicative of abnormal network behavior, helping to identify and fix issues before they escalate and cause problems. The ideal scenario is that networks achieve a level of automation that allows them to self-heal without human intervention.

Smarter Multi-CDN architecture

AI can also boost the performance of Content Delivery Networks (CDNs) by intelligently automating content routing in real time to the optimal edge server, which is more efficient than rules-based delivery and so further minimizes latency for data transfer. The same approach can be used for multi-CDNs – i.e. algorithms that determine the best CDN to use. And just as with core networks, ML models can be trained to analyse traffic flows and capacity, identify anomalies and take appropriate action.
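A minimal sketch of the multi-CDN decision follows, assuming each CDN reports a recent latency and error rate. The CDN names, metrics and penalty weight are invented for illustration, and a fixed formula stands in for what would, in an ML-driven system, be a scoring function learned from traffic data.

```python
def pick_cdn(metrics, error_penalty_ms=1000.0):
    """Return the CDN with the lowest latency plus error-rate penalty."""
    def cost(name):
        m = metrics[name]
        # Treat each unit of error rate as costing extra milliseconds.
        return m["latency_ms"] + error_penalty_ms * m["error_rate"]
    return min(metrics, key=cost)

# Hypothetical measurements for one viewer region.
metrics = {
    "cdn_a": {"latency_ms": 40.0, "error_rate": 0.05},
    "cdn_b": {"latency_ms": 55.0, "error_rate": 0.01},
    "cdn_c": {"latency_ms": 70.0, "error_rate": 0.00},
}

print(pick_cdn(metrics))
```

Note that the fastest CDN is not chosen here because its error rate outweighs its latency advantage, which is the kind of trade-off a static rule such as "always use the lowest-latency provider" would miss.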

Surfacing the right video content is harder, and more important than ever

Consumers need a shorter, straighter path to the content that resonates with them. Video libraries are expanding at a breakneck pace, magnified by the rise of super aggregation models from the likes of Amazon, Sky (Sky Q) and Roku. Unified content discovery is a real challenge in the context of super aggregation, as diverse content assets from different providers typically mean disparate metadata tagging systems that need to be normalized.

Improving the understanding of search intent

We have already looked at how AI is transforming video content metadata tagging and noted that this can benefit search by making results more accurate. Another important way that AI is enhancing search is through advances in Natural Language Processing (NLP) techniques that better understand the intent behind a search query, and by improving the accuracy of complex queries.

Voice search has potential but is complex

Asking for information, recommendations and posing queries with spoken words can be easier and faster than navigating through an electronic program guide or menu system – particularly for people with visual challenges. Voice search could also be used to find specific sections of a video/TV program based on a spoken description. Taken together, this puts the voice interface in a good position to act as a unified tool for content discovery.

The central role of recommendations

Large content libraries are positive in that consumers have more choice, but can be negative if the amount of content overwhelms people to the point of option “paralysis”, where people cannot decide what to watch, get frustrated and end up watching nothing. In this context, the role of contextually relevant, personalized recommendations is more important than ever before. However, effective recommendations of this kind are hard to execute well, evidenced by the fact that recommendations often miss the mark with consumers. The level of personalization provided by recommendation engines depends on the breadth and quality of the available data. Amazon, for example, is extremely data rich, not only because of the size of Amazon Video/Prime Video in terms of users and content, but because of the additional data insights it can bring into the mix from users interacting with other Amazon assets (Amazon marketplace, Alexa voice assistant, Amazon’s audio books, music, games etc.). The data available must also be accurately and consistently labelled, which is where video content metadata tagging solutions play a critical role. Moreover, the ML models that underpin recommendation engines need to be increasingly sophisticated, able to scale and of course, well trained. Looking at Amazon again by way of example, it uses cutting edge approaches such as graph neural networks (GNNs) that allow it to connect information about its content and customers from a variety of sources and to process data sets at scale.

AI to drive segmented trailers

Standard movie trailers are typically based on a couple of compilations that highlight different aspects of the movie, for example a trailer that homes in on the lead actor or plays up a film’s high-octane credentials. These compilations are high-level and are not created to align with, and appeal to, different audience segments. We have already seen how AI can be used to automate the generation of clips and trailers, but AI can take this a step further by enabling the production of personalized short form content assets. The basic idea is to use AI for advanced customer segmentation modelling, with automated labelling of the segment attributes. In parallel with this, the original video content assets are automatically tagged, with algorithms trained to identify and extract content assets that best match the preferences and needs of a defined user segment(s).

Towards user-driven adaptive video content

One powerful form of personalization is a format that allows viewers to tailor the plot narrative and ending to their own tastes. In December 2018 Netflix took this approach with Black Mirror: Bandersnatch (Bandersnatch). The dystopian program allowed viewers to select between different actions for the protagonist at different points in the story, with their choice sending the narrative along different branching paths. Formats like Bandersnatch are a novel, interactive experience and are designed to encourage repeat viewing as fans explore the alternative storylines.

AI as an aid to adaptive program design and implementation

The narrative alternatives in Bandersnatch were not auto-generated by AI, but were created by teams of experienced scriptwriters and producers. Where AI can help with these types of complex, non-linear, interactive formats is in managing content assets and automating workflows. For example, metadata tagging can be used to locate quickly and precisely where to trigger a decision tree function in the story, and to automatically select and load the corresponding video content. Algorithms can be trained to track and “remember” the viewers’ choices and the personalized story lines, which in turn provides a rich seam of data for future recommendations.
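To make the workflow concrete, here is a hypothetical sketch of how tagged decision points could drive a branching story and record viewer choices. The segment identifiers, timestamps and choice labels are invented for illustration and do not describe how Bandersnatch was actually built.

```python
# Each segment carries metadata: when the decision prompt fires and where
# each choice leads. All values here are made up for illustration.
story = {
    "intro":    {"decision_at_s": 95.0,  "choices": {"accept": "office", "refuse": "home"}},
    "office":   {"decision_at_s": 410.0, "choices": {"stay": "ending_a", "quit": "ending_b"}},
    "home":     {"decision_at_s": 380.0, "choices": {"work": "ending_b", "rest": "ending_c"}},
    "ending_a": {"decision_at_s": None,  "choices": {}},
    "ending_b": {"decision_at_s": None,  "choices": {}},
    "ending_c": {"decision_at_s": None,  "choices": {}},
}

def play(story, start, picks):
    """Follow the viewer's picks through the story graph.

    Returns the sequence of segments loaded and a log of
    (segment, decision timestamp, pick) tuples for later analysis.
    """
    path, log, segment = [start], [], start
    for pick in picks:
        choices = story[segment]["choices"]
        if pick not in choices:
            raise ValueError(f"{pick!r} is not offered in segment {segment!r}")
        log.append((segment, story[segment]["decision_at_s"], pick))
        segment = choices[pick]
        path.append(segment)
    return path, log

path, log = play(story, "intro", ["refuse", "rest"])
print(path)
print(log)
```

The choice log is the part that feeds recommendations: it records which branch each viewer took and when.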

AR TV companion applications

We have looked at how augmented reality (AR) overlays can be used to enhance virtual sets and broadcast programming. Alongside these, AR can be used to enhance viewer interactivity, with the means of doing so controlled by consumers via AR companion applications, typically on smartphones. AR applications are becoming popular with consumers and the volume of AR app downloads is steadily growing, as shown in Figure 4.

Non-synchronized AR TV applications

Applications of this kind are usually positioned as promotional tools for a film or TV program where the app is not synched with the show as viewers play it on the main screen. This is by far the most common approach and is also used for other use cases besides TV, including retail.

Synchronized AR TV applications

Interactive AR graphics can be layered over the TV screen or displayed alongside it, with the AR content synched with the corresponding video/TV content as it is played. AR TV applications of this kind must be able to recognize the TV screen within the camera feed, recognise content and/or time stamps in the video/TV program being played, and synchronise AR overlays with specified moments in the content. This all relies on computer vision algorithms that have been trained to detect video frames and objects within frames, which in turn relies on robust video content metadata tagging. There is a long list of potential use cases for synchronized AR TV applications, the most prevalent being to provide additional information relating to a program (e.g. actors in a film, footballers in a match, reality TV stars). But it can also include synchronized entertainment and fun features – animations, music, AR stickers, mini-quizzes, polls.

AI cannot conjure the perfect business model, but advanced analytics can help by providing insights that can make business decisions better informed. For example, ML algorithms can be used to fine-tune existing segmentation models or surface attributes that reveal completely new clusters, with models dynamically updated based on changing market trends and customer behavior. This enables multiple business scenarios, including segmented, targeted video bundles in terms of the content, streaming quality (SD, HD, 4K), additional services (e.g. music, games) and pricing (e.g. ad supported, subscriptions, tiered pricing).

Propensity modeling is improved thanks to ML algorithms that can more accurately predict the probability of customer intent and work to a more granular level. The ability to understand customer intent confers huge competitive advantages as it can provide insights into the type of content and features that will appeal most to customers and also indicate their propensity to churn. Accurate insights into churn trajectories are particularly useful for streaming video platforms, since churn rates are high and unpredictable.
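A propensity score is usually expressed as a probability. The sketch below uses a logistic model, one common choice, to turn customer features into a churn probability. The feature names, coefficients and sample customers are all hypothetical; a real model would learn its coefficients from labelled historical data.

```python
import math

# Assumed coefficients, chosen by hand purely for illustration.
WEIGHTS = {
    "days_since_last_view": 0.08,
    "hours_watched_last_month": -0.10,
    "payment_failures": 0.90,
}
BIAS = -2.0

def churn_propensity(customer):
    """Return a churn probability in (0, 1) from a logistic model."""
    z = BIAS + sum(w * customer.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))

engaged = {"days_since_last_view": 1, "hours_watched_last_month": 40, "payment_failures": 0}
lapsing = {"days_since_last_view": 30, "hours_watched_last_month": 2, "payment_failures": 1}

print(round(churn_propensity(engaged), 3))
print(round(churn_propensity(lapsing), 3))
```

Scores like these can then be thresholded or ranked to decide which customers receive retention offers.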

Informing content investment

A recent development is the use of AI for film and TV investment decisions, but in our view solutions of this kind should be viewed as assistive tools only. ML predictive modelling can be applied to data related to a proposed film or TV program to surface insights that indicate how successful it might be, and therefore if it will provide a decent return on content investments. Relevant data points include script analysis, popularity of the main actors, audience viewing stats, the success of similar films or TV programs. AI solutions of this kind can interrogate and model multiple data inputs at speeds that human teams cannot match. This could be useful if executives need to make investment decisions in a hurry, for example at film festivals. It would also allow executives to change different parameters of a film/TV program to see how this affects performance.

However, the ability of AI solutions to accurately predict a film or TV program’s level of success has limitations. Models rely on historical data, which means predictions are based on what has pleased audiences in the past rather than what could ignite them going forward. Solutions can also struggle to capture culture differences and can predict outcomes that are obvious, for example that having a hot A list actor in a lead role will boost engagement.

AI is enhancing advertising on multiple fronts

Linear TV is an established and trusted advertising channel, while online video platforms are seeing rapid growth in advertising revenues, as shown in Figure 5. Ad-funded streaming services are going to be increasingly important as video streaming matures and content owners look to expand viewership outside of subscription models. But TV and video advertising is becoming more complex and challenging – a situation that will only magnify going forward. Issues include data deprecation as well as audience and device fragmentation, all of which make attribution and measurement harder. The good news is that AI can help, by making TV advertising more effective and engaging, with key means including immersion, advanced analytics, automation, and metadata tagging.

AI supports interactive advertising experiences

Millennials and younger generations in particular are drawn to interactive, immersive digital content, and will gravitate to digital advertising that has similar qualities. TV advertising may not be renowned for interactivity, but this can be helped by AR. AR graphics can be used to provide users with additional information about products featured in a video in ways that are highly interactive, be it in the form of a companion AR TV app or an online AR shopping experience associated with a program. Bravo TV, owned by NBCUniversal, did exactly this with its Virtual Bravo Bazaar AR shopping mall (online and mobile app) that gave fans an immersive way to virtually explore and buy products featured in popular Bravo programs like Below Deck, Southern Charm and Family Karma (among others).

Creative support

AI can assist advertising creative outputs in a similar way that it can be used to support the production of TV and film trailers. Algorithms can be trained on existing advertising campaigns, copy, and other inputs in order to identify winning combinations and recommend iterations that can support a new ad campaign. AI can also help make advertising more accessible with auto subtitling and advanced dubbing, just as it can for film and TV content formats.

Advanced analytics

- AI improves addressable TV advertising. Algorithms can interrogate and surface patterns at speed and scale from large, diverse data sets that can be used to create more accurate audience identification and enable more effective customer segmentation modelling. This enables advertising to be tailored for different viewer segments. This is particularly important in a climate of tightening data privacy regulations, walled gardens and third-party cookie deprecation that is making it harder to understand and reach consumers. Even service providers and brands with deep reserves of first-party data will need solutions of this kind: although first-party data is valuable, it does not provide a full picture of audience preference and will not help in the identification of new customers.

- ML and augmented predictive modelling can be used to determine changes to advertising/promotional campaigns that will improve performance against specified performance objectives.

Automation

- Streamlining workflows. Advertising workflows are becoming increasingly complex with the growth in online video platforms and the proliferation of connected devices for consuming video. AI automation can help manage and streamline advertising workflows including forecasting and reporting, creative asset management, and frequency planning (among other things).

- AI can help improve multi-platform advertising. AI automation can distribute advertising to TV platforms and connected devices where consumers are most likely to act on advertising messages. This will be particularly useful in the context of streaming video services.

- Better programmatic performance. Programmatic advertising is, by nature, highly automated and already relies on AI to varying degrees. But advances in ML, deep learning (DL), and neural networks can take programmatic advertising to the next level, by further optimizing ad impressions in the real-time bidding process.

Metadata tagging

- Ad insertion and product placement. Algorithms can identify markers in videos that are suitable spots for an ad insertion and/or product placement, and then insert the ad in question. Markers can include structural elements like precise time codes or shot change boundaries or contextual elements such as a scene/activity/mood that best resonates with the brand values or conversely, identifying those that do not.

- Brand imprints. Content tagging can help brand owners to measure the level of exposure achieved by a product placement or sponsored item in a video or live broadcast. For example, for a brand sponsoring a sports event content tagging enables them to see exactly when, where and for how long player shirts or billboards featuring their logo were visible and prominent in a game.

- Metadata tagging. This can be applied to the content of an ad, and the information used to select ads that are the best contextual fit for media inventory. For example, tagging can be used to extract the appropriate ad from a server for a slot in sports programming.
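The contextual matching described in the bullets above can be sketched as a tag-overlap comparison. This example assumes that both the ad slot and each ad have already been tagged, and uses Jaccard similarity as a simple stand-in for a learned relevance model. The tags, ad names and exclusion rules are invented for illustration.

```python
def pick_ad(slot_tags, ads):
    """Choose the ad whose tags best overlap the slot's tags.

    Ads whose exclusion tags clash with the slot are skipped, reflecting
    content that does not fit the brand's values. Returns None if nothing fits.
    """
    best_name, best_score = None, 0.0
    for name, ad in ads.items():
        if ad["exclude"] & slot_tags:
            continue
        union = ad["tags"] | slot_tags
        # Jaccard similarity: shared tags divided by all distinct tags.
        score = len(ad["tags"] & slot_tags) / len(union) if union else 0.0
        if score > best_score:
            best_name, best_score = name, score
    return best_name

# Hypothetical slot in family-friendly sports programming.
slot = {"sports", "football", "family"}
ads = {
    "trainers": {"tags": {"sports", "football", "fashion"}, "exclude": set()},
    "beer":     {"tags": {"sports", "football"}, "exclude": {"family"}},
    "toys":     {"tags": {"family", "kids"}, "exclude": set()},
}

print(pick_ad(slot, ads))
```

The "beer" ad would otherwise score highest on overlap, but its exclusion tag removes it from family programming before scoring takes place.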

AI is shaping next-generation shoppable TV

Shoppable TV/video describes experiences that enable viewers to purchase products featured in TV programs and videos. The basic premise is not new, as shopping channels like QVC have been around for years, while certain broadcasters like Comcast-owned NBCUniversal and Sky are using QR codes to drive shoppable advertising. But the nature of TV shopping is changing, with deeper TV e-commerce integrations and a more seamless experience driven by a convergence of forces including increased uptake of smart TVs (see Figure 6) and AI solutions. An important development is the use of automated metadata tagging to identify and label products that appear in a video. Viewers can be notified when a featured product is available for purchase, enabling them to find out more about the product and, if they like it, purchase the item. This is the approach behind a shoppable TV experience UK broadcaster ITV introduced in 2021 for its popular reality TV show Love Island.

- Processing even a simple payment can involve multiple steps and significant human intervention at the back end, but AI can streamline the process by automating workflows and providing decision support.

- AI can streamline identity verification by using various biometric solutions including fingerprint, facial, and iris recognition.

- ML solutions can be used to detect fraudulent activities on transactions in real time, for example by monitoring data patterns and behavior to detect anomalies that are indicative of potentially fraudulent activity.

The role of voice shopping: still a way to go

A voice interface can be used to make payments directly or via an AI voice assistant controlled by the user. In Omdia’s 2021 Consumer AI survey the majority of respondents used their AI assistants for shopping activity to some degree, as shown in Figure 7. However, there are hurdles to clear before voice shopping becomes mainstream. A basic issue is that many people are not comfortable using their voice to make payments, as cited by 48% of respondents in the survey. Lack of trust is another deterrent: 32% of respondents didn’t trust the security of payments made via a voice assistant, and 29% worried that the assistant would not understand them and would buy the wrong things.

- AI in TV and video production is about augmenting human teams. AI solutions can carry out routine procedures and time-consuming tasks, freeing up teams to focus on more high value, creative activities.

- AI-powered subtitles and dubbing enhance viewing experiences and create new opportunities. AI is driving improvements in the quality of subtitles and language dubbing, services that are valued by viewers. Subtitles and dubbing make content more accessible to those with aural and visual challenges, while opening new monetization windows by taking original language content to international audiences.

- Use automated metadata tagging to support content compliance. Content is often under subject matter restrictions, and rules can vary by country, region, and even according to environments (e.g. content distributed to airlines). ML algorithms can be trained to identify and tag content that is subject to unique restrictions, with the rules flexed according to different compliance scenarios.

- Experiment with interactive formats. This includes augmented reality (AR) TV applications and audience-driven narrative interactivity where viewers can alter the plot narrative, creating branching story lines and endings. Nascent as they may be today, initiatives of this kind deepen engagement. Although consumer Virtual Reality (VR) has potential it remains a niche industry at present, not just in terms of addressable audiences but also content revenues.

- Use AI-driven insights to sharpen business decisions. Advanced analytics can fine-tune existing segmentations or reveal new clusters, with models dynamically updated based on changing behaviors. This supports multiple business imperatives, including better designed and targeted video service bundles. AI can also improve propensity modeling that can provide insights into the type of content and features that will appeal most to customers as well as their propensity to churn.

- Put advanced content metadata tagging to work. Automated metadata tagging can label video content at speed, scale and with great accuracy. It enables a growing range of use cases, including automated clip generation, accurate placement of viewing prompts (e.g. “Skip intro”, “Watch next”) along with multiple advertising scenarios such as enhanced ad insertion and product placements.

- Double-down on discovery. Video content libraries are getting bigger, magnified by super-aggregation models. Consumers can be overwhelmed to the point of option “paralysis.” Metadata tagging that’s more granular improves the accuracy of search and the relevance of recommendations. Alongside these benefits, AI techniques enable a better understanding of the intent behind search queries. Voice search is proving its potential – for many consumers, asking for information can be faster and easier than navigating menu systems.

- Leverage AI to make services more inclusive. Voice control, gesture control and eye-tracking interfaces all make video services more accessible: voice for those with visual challenges, gesture where verbal commands could be an issue, and eye tracking in cases of severe disabilities. Alternative interfaces can be viewed as niche, but many pave the way for broader innovation, in the way that voice control is becoming a mainstream product.

- AI can be an assistive tool for ad campaign creation. It is not only film and television production that benefits from AI. ML algorithms can be trained to identify and recommend winning creative elements, combinations, and outputs to support campaign ideation and creation. Alongside this, AI-enhanced subtitles and dubbing can make advertising more accessible, while AR can make advertising formats more interactive.

- AI can help move the needle on addressable advertising. AI-augmented analytics enable more precise customer segmentation modelling. This improves the performance of personalized advertising at scale, enabling the creation of different advertising messages tailored for different audience groups. This is particularly important in a climate of increasing data privacy regulations, walled gardens, and third-party cookie deprecation that is making it harder to understand and target consumers.

- Look to AI to assist with increasingly complex advertising workflows. AI-driven automation can help manage and streamline advertising workflows, including forecasting and reporting, frequency planning, and creative asset management. AI automation can also assist multi-platform advertising by distributing ads to TV/video platforms and connected devices where consumers are most likely to engage.

- Direct metadata tagging to advertising content. Metadata tagging can be applied to the content of an advert, and the information used to select adverts that are the best contextual fit for media inventory. For example, tagging can be used to extract the appropriate adverts from a server for a slot in children’s programming.